What Gartner's 2026 tech trends mean for product teams, not CIOs

Of Gartner's ten 2026 technology trends, four matter disproportionately for product builders: AI-native development, multiagent systems, domain-specific language models, and digital trust. AI-assisted development works only when grounded in structured context, not clever prompts. Multiagent systems are already in 80% of enterprise apps shipped in Q1 2026, yet 88% of agent pilots never reach production, because the bottleneck is product design, not model quality. Domain-specific models outperform general ones for targeted use cases, but only when a pre-development business case has set accuracy and cost thresholds. Trust is becoming a visible part of the product surface, and in regulated and European markets it is now a baseline requirement. Teams that win in 2026 will pick the two or three trends that intersect with their roadmap, not try to act on all ten.

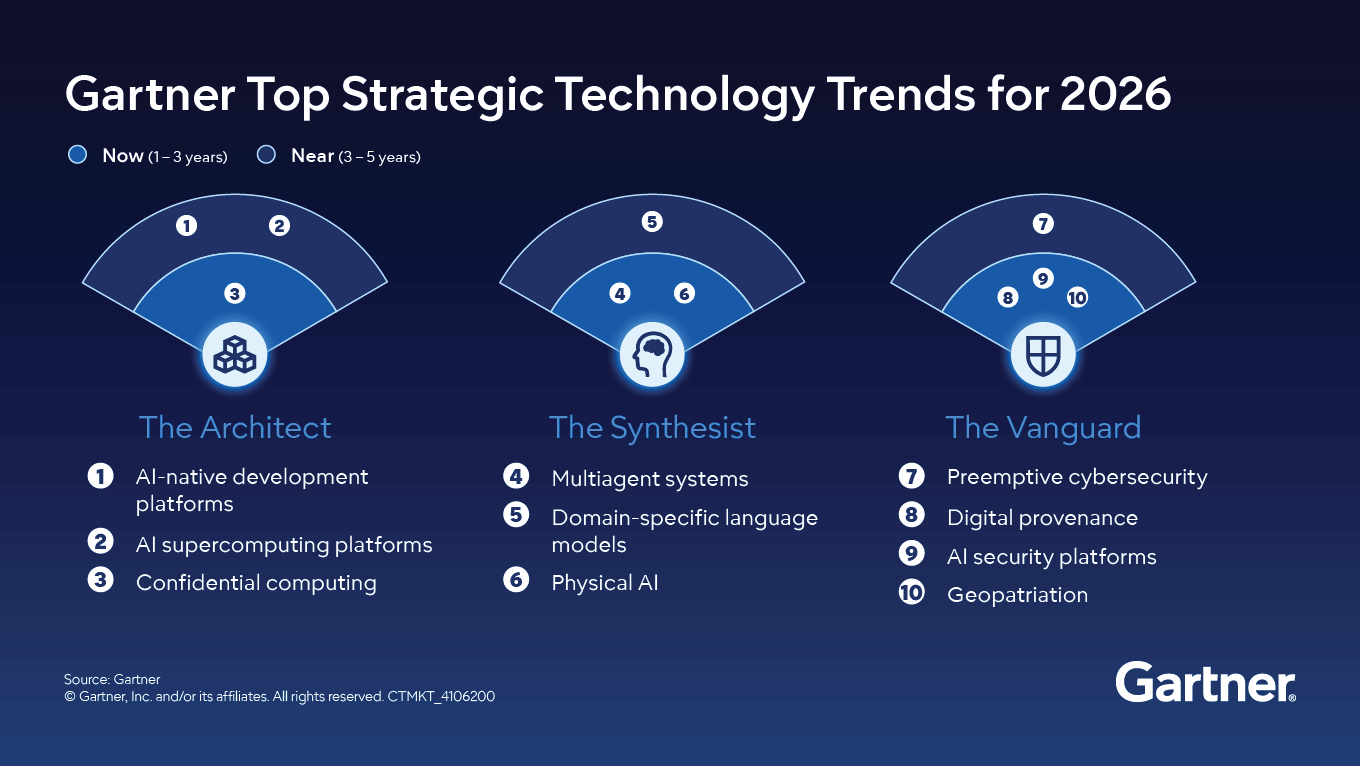

Gartner published its top strategic technology trends for 2026 last October, presenting ten trends grouped under three themes: The Architect, The Synthesist, and The Vanguard. The recap wave that followed was predictable. Within weeks, dozens of consultancies and vendors had published their own breakdowns, each walking through the same ten trends with broadly similar commentary aimed at the same audience: enterprise CIOs.

Six months in, that audience has had time to react. Roadmaps have been updated, budgets reallocated, and some of the more speculative trends have already drifted from the conversation. This is a useful moment to revisit the report, because the noise has cleared and a different question becomes interesting: what does any of this mean for the people building digital products, rather than the people governing enterprise IT estates?

The question matters more than usual because the gap between AI ambition and AI delivery is widening. RAND's 2025 analysis found that over 80% of AI projects fail to deliver their intended business value, twice the failure rate of non-AI IT projects. MIT's NANDA research, published in mid-2025, reported that 95% of generative AI pilots produce zero measurable return, a figure that has since been challenged on methodology but whose underlying pattern is consistent with other data. S&P Global tracked the abandonment rate jumping from 17% in 2024 to 42% in 2025. Deloitte's 2026 State of AI in the Enterprise report, based on a survey of more than 3,000 leaders, reframes the same problem more usefully: only 25% of organisations have converted 40% or more of their pilots into production systems, and only 34% are genuinely reimagining products or business models around AI.

Adoption is climbing. Execution is falling behind. The technology is rarely the limiting factor in any of this. The problem is that teams adopt trends without translating them into product decisions.

The CIO framing of the Gartner report speaks to a specific reality of legacy systems, multi-year transformation programs, and large vendor portfolios. Most product teams, especially those inside startups, scaleups, and corporate innovation groups, are not in that position. They are deciding which feature to ship next quarter, how to keep their AI costs predictable, and whether their architecture will survive the next funding round.

Read from that perspective, four of the ten trends matter disproportionately, and the implications are not the ones the original headlines suggested.

Source: Gartner

AI-native development platforms: smaller teams, different skills

Gartner predicts that by 2030, 80% of organizations will evolve large software engineering teams into smaller, AI-augmented teams. For an enterprise CIO, this is a workforce planning question. For a product team, it is already the operating model.

The interesting shift is not that AI writes code. It is that AI changes the shape of the team and what counts as productive work inside it. Earlier this year, one of our internal teams ran a hackathon to test how far AI tooling could take a small group of developers in three days. The business team had prepared around twenty-five documents describing the product: personas, journeys, business rules. The first instinct, which is also the most common one, was to skip most of that documentation and start prompting. Three days later, the team had produced more than 100,000 lines of generated code. It barely worked. Parts of three steps in a seven-step user journey were implemented, and two developers spent another week stabilizing the code enough to demo a flow that resembled the intended product.

The volume of output looked like productivity. The codebase itself was fragile, and small changes meant starting new prompt chains rather than improving the existing implementation. The lesson from that experiment, which has now run through several iterations, is that AI-assisted development becomes reliable only when it is grounded in a structured context: clear product documentation, explicit technical constraints, and short iteration cycles. Better prompts do not produce better systems. Better context does.

That conclusion changes who is valuable on the team. The bottleneck moves from typing speed to judgment, from how fast someone codes to how well they can specify, constrain, and review what the model produces. Roles that used to be sequential, designer to engineer to QA to DevOps, are collapsing into more compact units where each person operates closer to the full stack with AI assistance, but only when the surrounding scaffolding is in place.

For product teams, the practical questions follow from that. Which parts of the development lifecycle benefit most from AI augmentation, and which still need senior human oversight? How do you maintain code quality and architectural coherence as generation speeds up? How do you onboard juniors when AI does the work that juniors used to learn from? How do you avoid the most common failure mode, which is generating impressive volumes of code that nobody can maintain? These are not abstract governance questions. They show up in pull requests every week, and the teams that have answered them deliberately are pulling ahead of the ones that have not.

Multiagent systems: the orchestration problem comes for product teams next

Multiagent systems sit at the centre of Gartner's Synthesist theme. The idea is that multiple specialized AI agents collaborate on complex workflows, each handling a slice of the problem. The enterprise framing focuses on automating internal processes such as procurement, IT support, or claims handling, where the agents replace or augment back-office work that used to require human coordination.

Product builders should pay attention for a different reason. Multiagent architectures are no longer a future category. They are already in most products. Gartner's data shows that 80% of enterprise applications shipped or updated in Q1 2026 embed at least one AI agent, up from 33% in 2024. A finance app that uses one agent to read transactions, another to flag anomalies, and a third to draft the user's monthly summary is no longer a research project. A customer support platform where a triage agent routes tickets to specialized resolution agents is becoming a competitive baseline rather than a differentiator. The technical building blocks are widely available off the shelf, and the architecture has moved from labs to roadmaps faster than most reports anticipated.

The harder problem, and the one that gets less attention, is what happens after the pilot. Industry analysis published in CIO reports that 88% of AI agent pilots never reach production. That is not a model quality problem. The agents work in demos. They fail when they meet real users, real integration constraints, and real edge cases.

This is fundamentally a product design problem dressed up as an engineering one. A multiagent flow introduces failure modes that single-agent or rule-based systems do not have. Users need to understand what each agent is doing without being shown a system diagram. They need to know when to trust an output and when to intervene. They need a way to recover when one agent in the chain produces something that downstream agents then build on. They need to see why an outcome happened, not just what the outcome was. Most current implementations punt on these questions and ship the multiagent flow as a black box with a chat interface in front of it. Those are the implementations that show up in the 88%.

That approach will not survive contact with skeptical B2B buyers. The teams that figure out how to make multiagent flows feel coherent and accountable, where the user can inspect, intervene, and correct, will have a real advantage. The teams that ship them as opaque automations will erode trust quickly, and erosion of trust in AI features is unusually expensive because users generalize from one bad experience to the entire product.

Domain-specific language models: the end of the generic AI feature

For most of 2024 and 2025, adding AI to a product often meant wrapping a general-purpose large language model in a thin interface and calling it a feature. Gartner's emphasis on domain-specific language models in the 2026 report signals that this approach is reaching its ceiling, and the data backs that up. MIT's NANDA research found that specialized vendor-led AI solutions succeed roughly 67% of the time, while internal generic builds succeed only around 33%. Domain focus and workflow integration are the strongest predictors of whether an AI initiative reaches production and stays there.

DSLMs are language models trained or fine-tuned on specialized data for a particular industry, function, or process. They deliver higher accuracy, lower cost per query, and stronger compliance posture than general-purpose models for targeted use cases. For sectors like healthcare, legal, finance, and regulated B2B software, this is no longer optional. A general model that hallucinates a regulatory citation or misclassifies a clinical document is not a feature; it is a liability that creates downstream remediation work.

The implication for product teams is a shift in how AI capability is sourced and scoped. Instead of defaulting to the largest available frontier model, teams need to ask which slice of intelligence the product actually requires, whether a smaller specialized model could do that job better, and whether owning the fine-tuning pipeline creates a defensible moat. For startups in particular, a well-tuned domain model on proprietary data can be more valuable than access to the latest general model that every competitor also has access to.

There is a discipline that has to come before any of these architectural decisions, though, and it is the one most often skipped. A pre-development business case for an AI initiative functions as a technical constraint document. The cost of what is being displaced, the accuracy threshold required to sustain that displacement, and the maximum allowable cost per unit of output together determine which model architecture makes sense before any model is evaluated. A system reaching 94% accuracy when the target is 99% is not nearly done. It is at a meaningful decision point about whether further investment is justified. Without those constraints defined upfront, accuracy targets become intuitive, infrastructure choices become arbitrary, and the decision to move to production becomes a matter of engineering preference rather than measurable evidence. This is the discipline that separates the 5% of AI projects that produce returns from the 95% that do not.

Digital trust as a product feature, not a compliance task

Three of Gartner's ten trends sit under the trust and security umbrella: preemptive cybersecurity, digital provenance, and AI security platforms. A fourth, geopatriation, addresses the same underlying concern from a different angle, focusing on where data and workloads physically reside in response to geopolitical and regulatory pressure. Gartner forecasts that by 2030, preemptive solutions will account for half of all security spending, and that enterprises neglecting digital provenance could face billions in compliance and sanction risks by 2029.

Read from a product perspective, the message is simpler. Trust is becoming a visible part of the product experience. Users, especially B2B users, increasingly expect to see where data came from, how an AI output was generated, who has access to what, and what happens when something goes wrong. Provenance, audit trails, and clear AI usage policies are no longer back-office concerns that legal handles in a separate document. They surface in onboarding flows, in admin dashboards, in vendor questionnaires, and in deal cycles.

In regulated environments, this constraint reshapes the product before any code is written. Working with clients in finance and other regulated sectors, we have built AI systems where data could not leave the customer's infrastructure, where credentials for hundreds of third-party accounts had to be managed within a private security perimeter, and where accuracy targets were defined not by what felt achievable but by what the business case required. A SOC 2 compliance requirement, for example, can determine that any AI system built for a particular workflow needs to run entirely within the customer's own infrastructure. That single decision, reached before any model evaluation begins, eliminates most hosted model architectures and shapes everything that follows: which open-weight models can be considered, what fine-tuning pipeline is feasible, how credentials are passed and destroyed within the security perimeter, and what the realistic cost per transaction looks like.

This is what geopatriation looks like at the product layer. It is not just about choosing a sovereign cloud provider for compliance reasons. It is about designing the architecture so that the product can clear a security review at all, in markets where increasing numbers of buyers are asking those questions. For European product teams in particular, where regulatory and data sovereignty pressures are intensifying, this is becoming a baseline requirement rather than an enterprise edge case.

The practical implication for product teams is that trust signals belong on the design surface, not the compliance checklist. Showing the user which model produced a result, how confident it is, and what data it relied on is a feature. Making it easy for an enterprise buyer to verify the integrity of your software supply chain is a feature. Designing the system so that data residency and access controls are visible and configurable is a feature. The teams that internalize this early will have a much shorter sales cycle when their buyers' procurement teams start asking the questions that Gartner is signaling will become standard.

What this actually means in practice

Gartner's report is, as always, a useful map of where enterprise priorities are heading. The risk for product teams is reading it as a to-do list and trying to act on all ten trends at once. That is not a strategy, and the failure-rate research suggests it is actively counterproductive. The vast majority of AI pilots that produce no measurable return are not failing because the teams behind them lacked ambition. They are failing because the ambition was not connected to a specific product decision, a specific business case, and a specific accuracy and cost threshold.

A more useful approach is to pick the two or three trends that intersect with the product's near-term roadmap and treat the rest as context. For most digital product teams in 2026, that intersection is somewhere between AI-native development practices, multiagent product experiences, domain-specific intelligence, and visible digital trust. Everything else on the Gartner list is worth tracking, but not worth restructuring around.

Working out which trends actually intersect with a specific product is the harder part of the exercise, and it is rarely something a team can do well in a single planning meeting. It is the kind of question we tend to work through with product teams during a Product Design Sprint, where the goal is to align on what the product needs to do, what the realistic constraints are, and which technology decisions matter for the next twelve months versus the next thirty-six.

The teams that win in 2026 will not be the ones that adopt the most trends. They will be the ones that understand which trends change the product itself, and which only change the slide deck.

Navigate AI adoption with our assistance

If you are evaluating an AI investment and want to pressure-test the business case before committing to a build, that is a conversation we are set up to have.